The Prospect of Human-Robot Intimacy: A Comparative Study on Robotic Companionship

Introduction

Background & Motivation

With the rise of large language models (LLMs) such as ChatGPT and lifelike social robots (e.g., Erica, MiRo-E), the boundary between "mechanical tools" and "emotional companions" is blurring. This study explores whether these artificial entities can genuinely improve quality of life or whether they merely offer a "simulated" intimacy.

Literature Review: From Sci-Fi to Reality

The review traces the evolution of human-robot interaction (HRI), from fictional droids like R2-D2 to contemporary "hugging robots" and AI-driven apps. While technology enhances emotional connectivity, a critical gap remains: current robots often lack responsive empathy, leading to "awkward" interactions despite their human-like appearance.

The Trust Paradox

As robots become more anthropomorphic, humans naturally develop stronger emotional attachments (Turkle, 2011). However, these bonds are fragile. The study identifies a lack of exploration into how anthropomorphism specifically influences the formation of trust in intimate human-robot relationships.

Research Question

To what extent can robotic companions replicate or substitute the trust experienced in intimate relationships with human partners?

Methodology & Prototyping

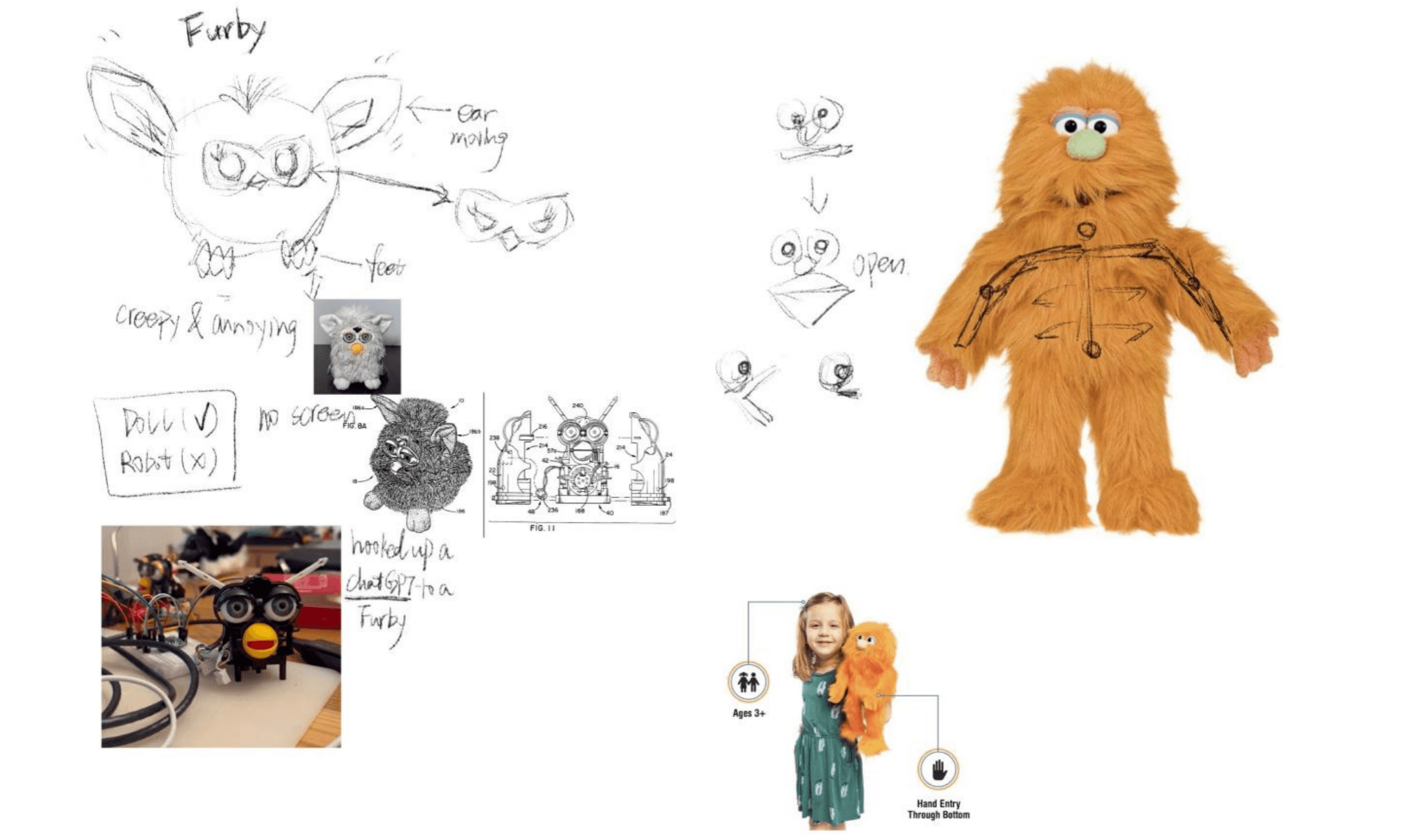

Design Strategy: Soft Interaction

To challenge the "cold and hard" stereotype of traditional robotics, I developed a furry, soft-textured puppet robot. This tactile approach aims to enhance emotional comfort and evoke stronger attachment responses, similar to a child's bond with a cherished toy.

Technical Implementation

The prototype integrates an Arduino-controlled servo system within the puppet to facilitate mouth movements and interactive gestures. Intelligent, real-time conversational capabilities were achieved through ChatGPT’s voice API, creating a seamless HRI experience.
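As a rough illustration of how speech could drive the mouth servo, the sketch below maps the loudness of the synthesized voice to a servo angle and formats it as a line of text for the Arduino. This is a minimal sketch under my own assumptions, not the prototype's actual firmware or host code: the function names, the 0 to 60 degree range, and the newline-terminated serial format are all hypothetical.

```python
def mouth_angle(level: float, closed_deg: int = 0, open_deg: int = 60) -> int:
    """Map a normalized loudness level (0.0 to 1.0) to a servo angle in degrees.

    The 0-60 degree range is an assumed default, not a measured value.
    """
    level = max(0.0, min(1.0, level))  # clamp out-of-range input
    return round(closed_deg + level * (open_deg - closed_deg))


def servo_command(angle: int) -> bytes:
    """Encode an angle as a newline-terminated ASCII line for a serial link."""
    return f"{angle}\n".encode("ascii")


# Hypothetical usage: a half-loud syllable opens the mouth halfway.
command = servo_command(mouth_angle(0.5))
```

On the Arduino side, a loop reading one integer per line and passing it to the servo would complete the chain. Keeping the mapping on the host makes it easy to tune the mouth range without reflashing the board.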

Experimental Design

The study used structured questionnaires based on the Rempel & Holmes Trust Scale to compare participant trust levels across three dimensions (Predictability, Dependability, and Faith), evaluating how "robotic intimacy" measures up against real human partnerships.

Experiment Design & User Testing

User Recruitment

I conducted user testing with 15 participants (aged 18-30) from diverse demographic backgrounds. All participants had a baseline understanding of AI and robotics to ensure informed interaction.

The "Trust Scale" Methodology

I used the Rempel & Holmes Trust Scale (17 items, 7-point Likert scale) to quantify trust across three dimensions: Predictability (consistency), Dependability (risk-handling), and Faith (emotional responsiveness).
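To make the scoring concrete, here is a minimal sketch of how one participant's item ratings could be averaged into the three dimensions. It rests on two assumptions: the item key is only partial, using the question numbers cited in the Results section (the full 17-item assignment is not listed in this write-up), and ratings are coded from -3 to +3, which would be consistent with the negative values reported later. The names `ITEM_KEY` and `subscale_scores` are illustrative.

```python
from statistics import mean

# Partial item key, taken from the question numbers cited in the Results;
# the remaining items of the 17-item scale are not assigned here.
ITEM_KEY = {
    "Faith": [2, 3],
    "Predictability": [4, 5, 6],
    "Dependability": [1, 7, 13, 17],
}

LOW, HIGH = -3, 3  # assumed coding of the 7-point Likert scale


def subscale_scores(responses: dict[int, int]) -> dict[str, float]:
    """Average one participant's item ratings within each trust dimension."""
    for question, rating in responses.items():
        if not LOW <= rating <= HIGH:
            raise ValueError(f"Q{question}: rating {rating} is outside {LOW}..{HIGH}")
    return {
        dimension: mean([responses[q] for q in items])
        for dimension, items in ITEM_KEY.items()
    }
```

Averaging rather than summing keeps the dimensions comparable even though they contain different numbers of items.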

Comparative Procedure

A controlled "Pre-test vs. Post-test" procedure was conducted in a park setting:

Baseline: Participants first assessed their trust in a real human partner based on past experiences.

Interaction: A 5-minute deep conversation with the ChatGPT-powered robotic puppet.

Evaluation: Participants completed the same scale reflecting on their interaction with the robot.
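Because each participant rated both a human partner and the robot, the two sets of scores can be compared within participants. The sketch below computes the mean paired difference (robot minus human) for one dimension. It is an illustration of the comparison, not the analysis script used in the study, and the function name is hypothetical.

```python
from statistics import mean


def mean_paired_difference(human: list[float], robot: list[float]) -> float:
    """Mean of (robot - human) across participants, paired by list position."""
    if len(human) != len(robot):
        raise ValueError("each participant needs one human and one robot score")
    return mean([r - h for h, r in zip(human, robot)])
```

A positive result means the robot was rated higher than the human partner on that dimension, and a negative result means the opposite.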

Results: Data Visualization & Key Findings

Comparative Overview

The data visualization revealed a distinct "Trust Profile" for each entity. Participants' trust scores for human partners were spread over a noticeably wider range, while trust scores for the robotic friend stayed within a narrower, more concentrated range.
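One simple way to quantify how widely scores are spread is to report the range and the sample standard deviation for each entity. The helper below is a minimal sketch of that idea. The name `spread` is hypothetical, and it needs at least two scores because the sample standard deviation is undefined for a single value.

```python
from statistics import stdev


def spread(scores: list[float]) -> dict[str, float]:
    """Summarize dispersion as range and sample standard deviation."""
    return {
        "range": max(scores) - min(scores),
        "stdev": stdev(scores),  # raises StatisticsError for fewer than 2 scores
    }
```

Running it once on the human-partner scores and once on the robot scores gives two directly comparable summaries.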

Dimensional Breakdown

Faith (F) - The Win for Robots

Unexpectedly, the robotic companion scored notably higher in the Faith dimension (Questions Q2, Q3). This suggests that users feel "safer" and more emotionally supported by the robot, as they do not fear being judged by moral standards or societal norms.

Predictability (P) - The Bottleneck

The robot scored significantly lower in Predictability, even reaching negative values (Questions Q4, Q5, Q6). While humans are perceived as having consistent social "mental models," the robot's simulated behaviors were often seen as harder to anticipate in complex social contexts.

Dependability (D) - Reliability & Deceit

Humans outperformed robots in handling emergent or unforeseen events (Q1, Q7). However, when it came to honesty and commitment (Q13, Q17), the robot was rated as more trustworthy because it lacks the "human capacity" to produce well-intentioned lies or deceitful excuses.

Data Limitations & Self-Reflection

I acknowledge the limitations of this study: the small sample size (15 participants) and the short, 5-minute interaction. These results provide a snapshot of early-stage emotional bonding rather than long-term relationship dynamics.

Discussion: Unpacking the HRI Trust Dynamics

A. The Paradox of Faith: Freedom from Judgment

Robots scored higher in the Faith dimension because they lack moral agency. Unlike humans, robots do not "judge" or feel "disappointed." This obliviousness creates a safe emotional space where users feel free from shame, though it remains a non-reciprocal relationship. As Sherry Turkle suggests, robots act as "relational artifacts" that trigger our biological buttons without true consciousness.

B. The "Black Box" of Predictability

Humans are more predictable because we share societal "mental models" and ethical frameworks. In contrast, robots can express emotions without comprehending their significance. This "meaning gap" makes their behavior harder to anticipate in complex scenarios. However, the study found robots are perceived as more predictable in avoiding conflicts, likely due to their limited social exposure and passive nature.

C. Reliability vs. Honesty: The "Coded Integrity"

While humans excel in handling emergent situations through intuition, they are also prone to "well-intentioned lies" driven by personal interest. Robots, governed by predefined code, lack the capability to deceive. This leads to a paradoxical conclusion: humans are more dependable in a crisis, but robots are more honest in their programmed commitments.

Conclusion: Redefining Companionship

Key Discovery: The Trust Paradox

The study reveals a surprising reality: trust scores for robotic companions are comparable to, and in some aspects (e.g., Faith), even higher than those of human partners. This is attributed to the robot's "programmed innocence"—its inherent consistency, obedience, and lack of social judgment or deceptive intent, which offers a unique form of emotional security.

Ethical & Social Challenges

While robots offer consistency and judgment-free support, they challenge the traditional essence of companionship. The non-reciprocal nature of these bonds raises critical questions: How will the widespread acceptance of robotic intimacy reshape human social structures, reproduction, and our very capacity for human-to-human vulnerability?

Future Vision

The path toward human-robot intimacy is fraught with complexity. Moving forward, the goal is not merely to enhance "Emotional Intelligence" in AI, but to strike a balance between technological innovation and ethical preservation. We must ensure that robots serve to enhance, rather than detract from, the richness of the human condition.

date published

15 Mar 2022

reading time

5 min

my role

Independent UX Researcher

main tasks

1. Self-Initiated Research Design
2. Comprehensive Literature Synthesis
3. Theoretical Modeling & Analysis
4. Risk & Opportunity Assessment